AI rules and realities

Otago's new Centre for Artificial Intelligence and Public Policy is taking a broad-based look at policy, ethics and governance issues associated with the ever-increasing application of AI.

It is easy to think of AI in terms of a driverless car that may soon be coming down a street near you. But the reality is we are already rubbing up against AI every day without even realising it, in the form of algorithms used for tasks ranging from shaping decisions in policing, social support and insurance to driving Twitter and Facebook feeds.

University of Otago minds are already delving into the social impact of AI through several research initiatives, including the AI and Society Research Group, the Centre for Law and Emerging Technologies, and the Law Foundation-funded project Artificial Intelligence and Law in New Zealand, which is examining law and public policy implications.

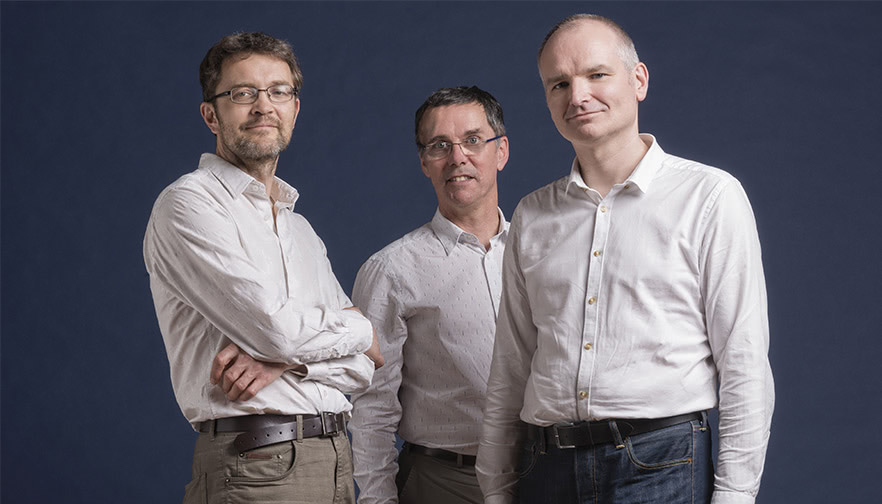

Associate Professor Ali Knott, Professor James Maclaurin and Professor Colin Gavaghan: "One of the reasons we set up CAIPP was that a lot of people – particularly those in government departments – were signalling that they want New Zealand to be getting this right."

Photo: Alan Dove

But this work is being taken even further through the University's recently formed Centre for Artificial Intelligence and Public Policy (CAIPP) – Te tari Rorohiko Atami, Kaupapa Here Tūmatanui. Led by co-directors Professor James Maclaurin (Philosophy) and Professor Colin Gavaghan (Law), it brings together wide-ranging expertise to explore policy, regulation, ethics and governance associated with AI.

Maclaurin says CAIPP members come from a range of areas, including computer science, economics, politics, social work and linguistics, and have been collaborating for several years already.

A recent memorandum of understanding means the centre has begun working with government ministries and Crown entities to examine how they use AI in the workplace and to support decision-making, with a view to setting up an ethical framework.

"The work we've been doing up until now has examined how government uses artificial intelligence, particularly in domains like criminal justice – but also slightly-related domains like social work and social welfare.

"One of the reasons we set up CAIPP was that a lot of people – particularly those in government departments – were signalling that they want New Zealand to be getting this right."

Maclaurin has also been appointed as the Royal Society Te Apārangi representative on the six-person Australian Council of Learned Academies Expert Working Group, examining AI in Australia.

He hopes CAIPP will become a resource for anybody looking at such technologies and the steps they need to put in place.

"One of the things we are finding is that it is very hard to have simple rules that suit all the different uses of artificial intelligence," he adds.

"Having psychologists, economists, sociologists and all sorts of other people in the room is tremendously useful because they spot things others might not know about."

Dr Emily Keddell: "On one hand, you might say human decision-making might be biased, so an algorithm with hundreds of variables is going to be a more accurate indicator of risk. On the other hand, algorithms are only as good as the data fed into them …"

Photo: Graham Warman

A good example is CAIPP steering group member Dr Emily Keddell (Social Work), who studies child protection and family support services, areas where there is increasing use of data and AI algorithms to support decision-making.

"It draws on large interlinked data sets which are now much more available through things like the Integrated Data Infrastructure, a large linked dataset from many sources, managed by StatsNZ," she says.

"Data are fed into these algorithms and it says 'yep, you're high risk', so you get the service – or, 'you're low risk' so you don't get the service."

Algorithms are also used overseas in child protection decision-making, but Keddell urges caution and says it is important we have clear views of the pros and cons, particularly around accuracy and bias.

"On one hand, you might say human decision-making might be biased, so an algorithm with hundreds of variables is going to be a more accurate indicator of risk. On the other hand, algorithms are only as good as the data fed into them, which might be riven with biases, so the algorithm just reproduces these."

For Keddell, ethical issues are also front of mind when working with CAIPP.

"Using a risk score might limit the rights of people in ways that are not fair, due to limited accuracy and biased data that over-assign risk to particular groups. On the other hand, it's a high stakes area where children can be really harmed by their parents. So it's important to think our way through the careful ethical conversations we need to have," she says.

"If they are to be used, there are some interesting ways of trying to manage it so that it's an aid to professional judgement, rather than dictating it."

Fellow steering group member Associate Professor Ali Knott (Computer Science), an artificial intelligence researcher, has always been interested in what AI's impact on society is going to be.

"The people building the technology need to step up and be involved in the discussion because there are all sorts of areas they don't know about, such as public policy, politics, or law or economics. We're not experts on those things, but we do know one piece of the puzzle."

In recent years he has become increasingly involved in interdisciplinary discussions about how, and to what extent, AI should be regulated, something he says should be easier with government departments than multinationals.

"Putting regulations in surrounding our own government's use of AI is one way New Zealand can create an appropriate model which may be picked up by other countries," he says.

"We quite like the idea of there being some sort of government body or quasi-government body that oversees all this stuff because, at the moment, revelations about the use of AI in government tend to arrive as kind of scandalous headlines."

In time some of those questions could be asked of commercial operators as well.

Knott says the discussion is also moving into the area of the impact of AI systems on jobs.

"Is New Zealand employment law going to be up to dealing with this? What happens if you sack a person and use a machine instead? There haven't been any rules around this until now."

AI has also found its way into politics, with information harvested from social media being used to help candidates tell voters what they want to hear.

"We always knew that newspaper editors were aware of 'if it bleeds it leads': people pay attention to things that are a bit scary or a bit weird – or even cute," says Maclaurin.

"When you get artificial intelligence and machine learning to drive your Twitter feed and it learns these things too and it learns them much more efficiently – such that all of a sudden your Twitter feed looks very scary and very weird and very cute – and, not long after that, politics starts looking weird and scary and cute," says Maclaurin.

"We're very aware that domains of law, like electoral law, are starting to look very old fashioned. Electoral law is about advertising up to election day and what you can do with your manifesto.

"But you don't meet elections in that way anymore. You meet them through your social media feeds and you don't see a whole manifesto – you see the one thing the party thinks you will find particularly compelling because its software has worked out what you like."

MARK WRIGHT

The 'wild west' of data privacy

Data privacy used to be about what information one party gave to another and what the latter did with it. But now it has as much to do with what might be inferred from things such as key strokes and social media likes – and that is taking it into a grey area.

"It's a bit like the wild west," says CAIPP steering group member Associate Professor David Eyers (Computer Science).

"You've got all these computing components that have largely been unregulated for quite a long time, and now there is a need to look at all the ethical questions."

There has been no regulatory framework to keep up with the pace of change in computing, particularly around AI, cloud computing and the collection of individual data, and Eyers wants to see accountable systems developed.

"How do you get rid of that big gap between technically-detailed, globally-distributed computing and the fact that it's actually dealing with each of our data? How do you manage that data and where it's going?"

Eyers was involved in the running of a recent Dagstuhl seminar in Europe, bringing together computer scientists, lawyers and public policy experts to discuss a series of topics in this area.

"Computer science has to evolve to increase the accountability of software systems because right now the situation is dire."

"What's been really interesting to see is the change in regulation, like the European Union's General Data Protection Regulation (GDPR), introduced in May, which allows EU citizens to request information about what data are stored, who the data have been given to, and where they have come from.

"This is the first major regulatory tool by which citizens are empowered to ask questions in their terms, such as what Google does with your information."

As a result, most internet users will recently have received notices from online services about updates to their privacy policies.

"Part of the challenge involves gaining consent. How do you, as a user, make informed consent about what these systems are going to be doing with your data?"

Eyers says it is likely most organisations' software hasn't been written to actually track consent, so he is interested in developing an accountability engineering process which runs alongside the main software.

"We can retrofit technologies that do information flow control tracking and provenance tracking on top of existing software so that we don't need to rewrite it all from scratch, or new software can be written with accountability in mind. But whatever we do, we need to get better at building accountable systems."